6 delivers on its promise, providing speed-ups of 50-60% in large model training jobs with just a handful of new lines of code. Authors: Suraj Subramanian, Seth Juarez, Cassie Breviu, Dmitry Soshnikov, Ari Bornstein.

Cloud SQL: fully managed database for MySQL, PostgreSQL, and SQL Server.

In DALI, any data processing task has a central object called Pipeline. EDIT: If you're not using Dask, you can simply use cudf. If it takes less than 12 GB RAM, then you are good.

GPU-accelerated prediction is enabled by default for the above-mentioned tree_method parameters but can be switched to CPU prediction by setting predictor to cpu_predictor.

CUDA memories: apart from the device DRAM, CUDA supports several additional types of memory that can be used to increase the CGMA ratio for a ... To overcome this problem, several low-capacity, high-bandwidth memories, both on-chip and off-chip, are present on a CUDA GPU.

CUDA kernel errors might be asynchronously reported at some other API call, so the stacktrace below m ... *** RuntimeError: CUDA error: out of memory.

The unreferenced memory is the memory that is inaccessible and cannot be used. The cell below will mount Google Drive in Google Colab. For training state-of-the-art (SOTA) models, a GPU is a big necessity.
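The tree_method and predictor settings mentioned above can be collected in a plain parameter dictionary. The sketch below is only illustrative: the value "gpu_hist" and the objective are assumptions based on older XGBoost releases (newer releases changed how the device is selected), and make_params is a hypothetical helper, not part of any library.

```python
def make_params(use_cpu_predictor: bool) -> dict:
    """Build a booster parameter dict in the style the text describes."""
    params = {
        # Assumption: "gpu_hist" is the GPU tree method in older XGBoost releases.
        "tree_method": "gpu_hist",
        # Assumption: an illustrative objective, not taken from the text.
        "objective": "binary:logistic",
    }
    if use_cpu_predictor:
        # Switch prediction to the CPU while training still uses the GPU.
        params["predictor"] = "cpu_predictor"
    return params


gpu_params = make_params(use_cpu_predictor=False)
cpu_predict_params = make_params(use_cpu_predictor=True)
print(cpu_predict_params)
```

A dictionary like this would typically be passed as the params argument when training a booster; check the documentation of the installed XGBoost version before relying on these names.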

The price was reduced from $700 to $550 when the 1080 Ti was introduced. I got the same problem when I started the training test as described with Kaggle images on a GTX1080i: batch_size can only be 1, and the machine crashes when I set batch_size to 2. A typical solution is to implement pre-allocation.

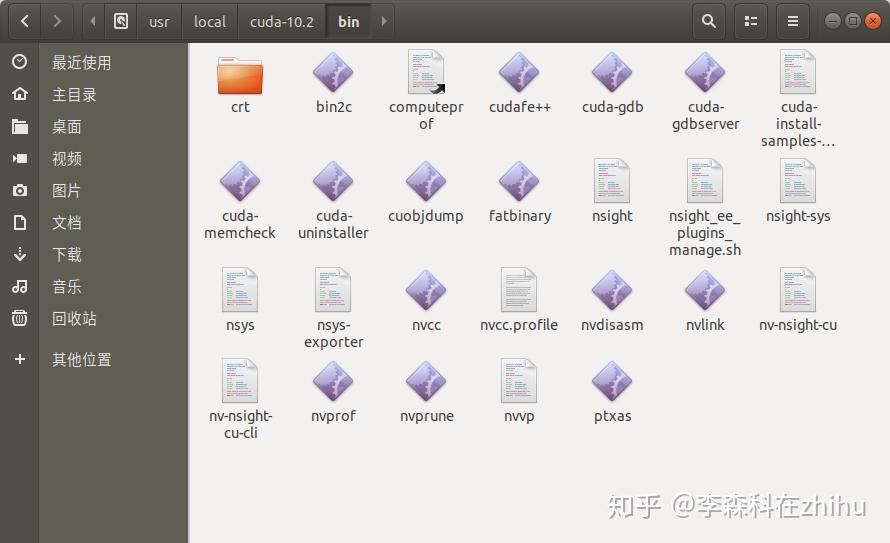

What happens when install cuda toolkit ubuntu free

(SD) card that has at least 4 GB of free memory. Try it on your code! First, we will explore the Satellite Image Classification dataset from Kaggle that we will use in this tutorial. If you have an Nvidia 730 sitting somewhere collecting dust, definitely go ahead and use it because for smaller networks, especially ...

TensorFlow is an end-to-end open source platform for machine learning. However, as we'll see in a computer vision ...

Watch the processes using the GPU(s) and the current state of your GPU(s): watch -n 1 nvidia-smi. Built on NVIDIA® CUDA-X AI™, RAPIDS unites years of development ... In Linux, we used the free -h command to output the amount of used and cached memory. After disabling the nouveau graphics driver ...

Dive-into-DL-PyTorch. With Tensor Cores, we go a step further: we take each tile and load a part of these tiles into Tensor Cores. 0 introduced this possibility with the NVRTC library. Unlike CUDA, OpenCL performs the kernel compilation phase at runtime.

I ran your model on Kaggle with batch_size = 48 and attached a screenshot of the requirements. Answer: Yes, it is possible to run TensorFlow on AMD GPUs, but it will not be that great. CUDA_ERROR_OUT_OF_MEMORY: out of memory on an Nvidia Quadro 8000, with more than enough available memory. This lesson is about multi-label prediction, and we're starting off with the Planet Amazon dataset from Kaggle.

memory_summary(device=None, abbreviated=False), where both arguments are optional. Some suggestions for working with limited GPU memory: you can reduce the batch size. 24 GiB reserved in total by PyTorch) If reserved memory is > allocated memory, try setting max_split_size_mb to avoid fragmentation.

As these transformations often work only on images, we would need to transform the numpy arrays into images first, augment the data, and finally transform them to tensors. Well, if you are a deep learning/machine learning person, then you must know about Kaggle.
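The two suggestions above (reduce the batch size, and set max_split_size_mb) can be sketched as follows. This is a minimal illustration under stated assumptions: run_with_backoff, is_oom, and fake_step are hypothetical names, the value 128 is arbitrary, and the stand-in step only simulates an out-of-memory error so the logic can be run without a GPU.

```python
import os

# Assumption: 128 is an illustrative value, not a recommendation from the text.
# PyTorch reads this variable when its CUDA allocator starts, so it has to be
# set before the first CUDA allocation in the process.
os.environ.setdefault("PYTORCH_CUDA_ALLOC_CONF", "max_split_size_mb:128")


def is_oom(err: RuntimeError) -> bool:
    """Return True if the error message looks like a CUDA out-of-memory error."""
    return "out of memory" in str(err).lower()


def run_with_backoff(step, batch_size: int, min_batch_size: int = 1) -> int:
    """Call step(batch_size), halving the batch size on OOM.

    Returns the batch size that succeeded. Errors that are not OOM, or an OOM
    at the minimum batch size, are re-raised unchanged.
    """
    while True:
        try:
            step(batch_size)
            return batch_size
        except RuntimeError as err:
            if not is_oom(err) or batch_size <= min_batch_size:
                raise
            batch_size = max(min_batch_size, batch_size // 2)


def fake_step(n: int) -> None:
    """Stand-in for a training step: pretends only 12 samples fit in memory."""
    if n > 12:
        raise RuntimeError("CUDA error: out of memory")


chosen = run_with_backoff(fake_step, batch_size=48)
print(chosen)
```

In a real PyTorch job, step would run a forward and backward pass, and it is common to call torch.cuda.empty_cache() between attempts and to inspect torch.cuda.memory_summary() to see how reserved and allocated memory compare.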
Thank you, but if we need to use the rjags or R2jags packages, we must install the JAGS language. Cloud Spanner: cloud-native relational database with unlimited scale and 99. I would say you could easily train your model with the 30+ hrs Kaggle gives.

3B and 125M param models and I get the same result with ... The Butterfly Image Classification Dataset. Note that this is memory usage for everything your user is running through ... Add the CUDA®, CUPTI, and cuDNN installation directories to the %PATH% environment variable. To free the data, just pass the pointer to cudaFree().